Robot vision with AI

Pharma

AI vision supports robots in bag handling

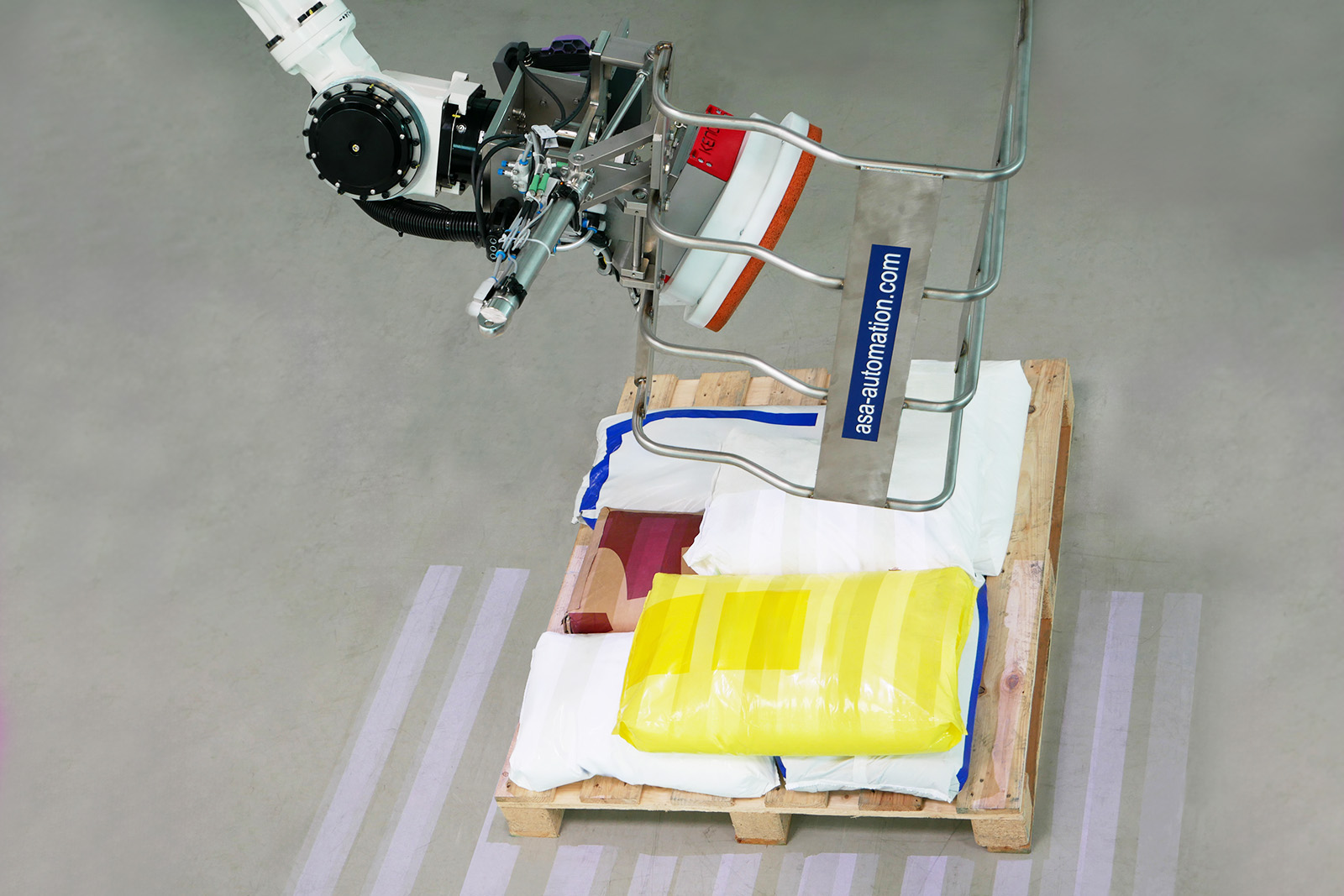

In an internationally renowned company in the chemical and pharmaceutical industry, the supply of chemical substances for processing in the downstream plant had long been organised manually: employees had to lift bags weighing up to 25 kg from pallets and prepare their contents for the subsequent production steps. This process was unsatisfactory from both an ergonomic and an economic point of view.

Because the contents of the bags are predominantly classified as hazardous goods, the company decided to automate this part of the plant as far as possible and commissioned ASA Automation to implement the solution. Vision On Line GmbH, which specialises in image-processing-based automation solutions, was brought in as a partner.

Seventh axis for more flexibility

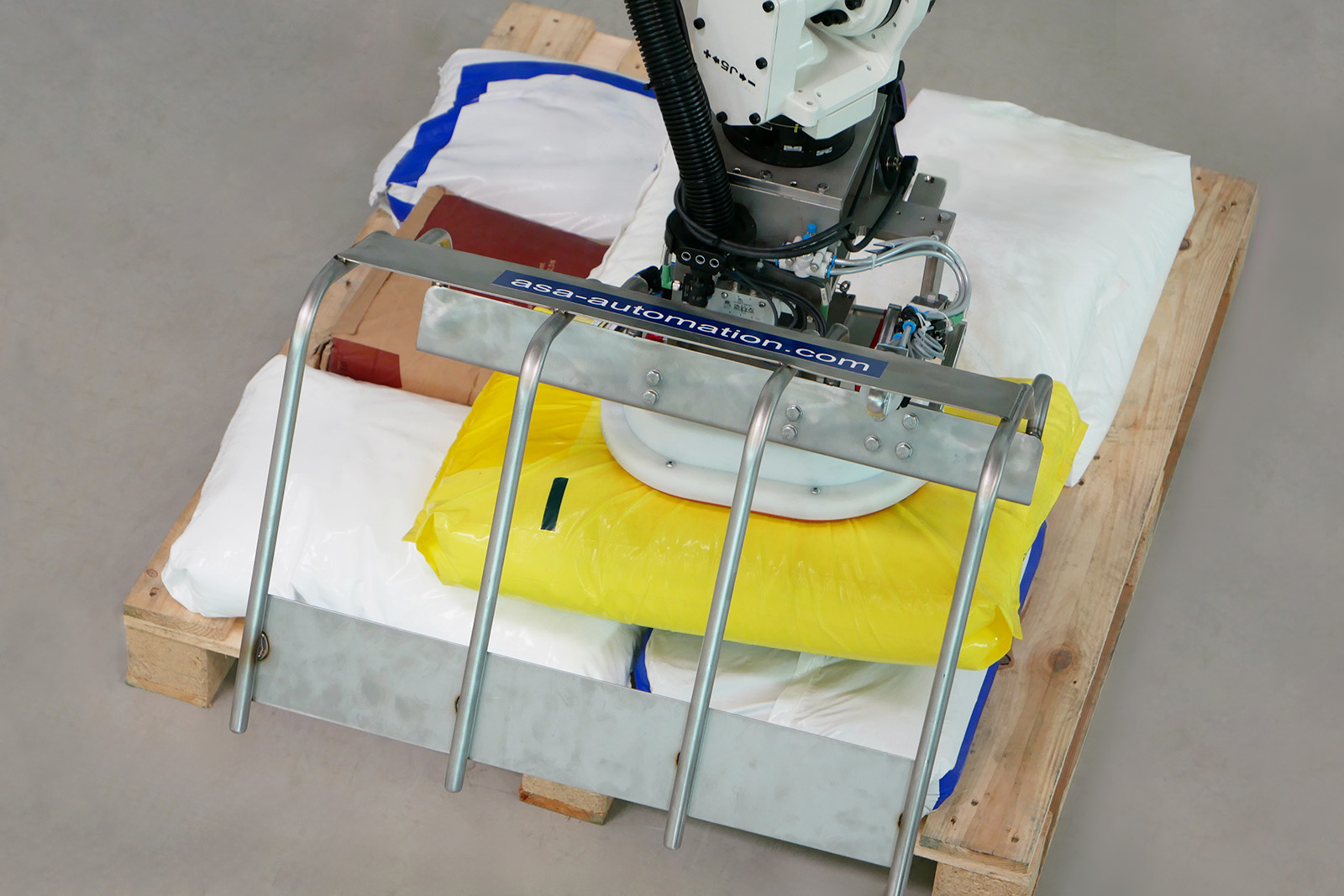

The mechanical part of the system was implemented with a six-axis robot and an additional seventh axis that moves it along a travel path in the X direction. This setup ensures that the pallets, which are brought to a defined position by conveyor elements, and the bagged goods on them are within reach of the specially developed vacuum suction gripper, and that the bags can be fed to the downstream system in exactly the right position.
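To illustrate why the seventh axis extends the working area, here is a minimal Python sketch under strongly simplified, hypothetical assumptions: the robot's work envelope is modelled as a sphere of radius `reach_mm` around a shoulder point that travels with the linear axis along X, and the axis is simply moved level with the target, within its travel limits. The function `plan_track_position` and all of its parameters are illustrative and not part of the actual system.

```python
import math


def plan_track_position(
    target: tuple[float, float, float],
    track_min_mm: float,
    track_max_mm: float,
    reach_mm: float,
    shoulder_z_mm: float = 0.0,
) -> tuple[float, bool]:
    """Return (track position, reachable) for a target point (x, y, z) in mm.

    Hypothetical model: the envelope is a sphere of radius reach_mm centred on a
    shoulder point at (track, 0, shoulder_z_mm) that travels along X.
    """
    x, y, z = target
    # Move the robot level with the target in X, limited by the track's travel range.
    track = min(max(x, track_min_mm), track_max_mm)
    # Straight-line distance from the shoulder to the target.
    dist = math.dist((x, y, z), (track, 0.0, shoulder_z_mm))
    return track, dist <= reach_mm
```

For a target beyond the end of the track, the axis stops at its limit and the remaining distance must be covered by the arm alone, which is where the simple reach check fails.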

Gripping accuracy is crucial

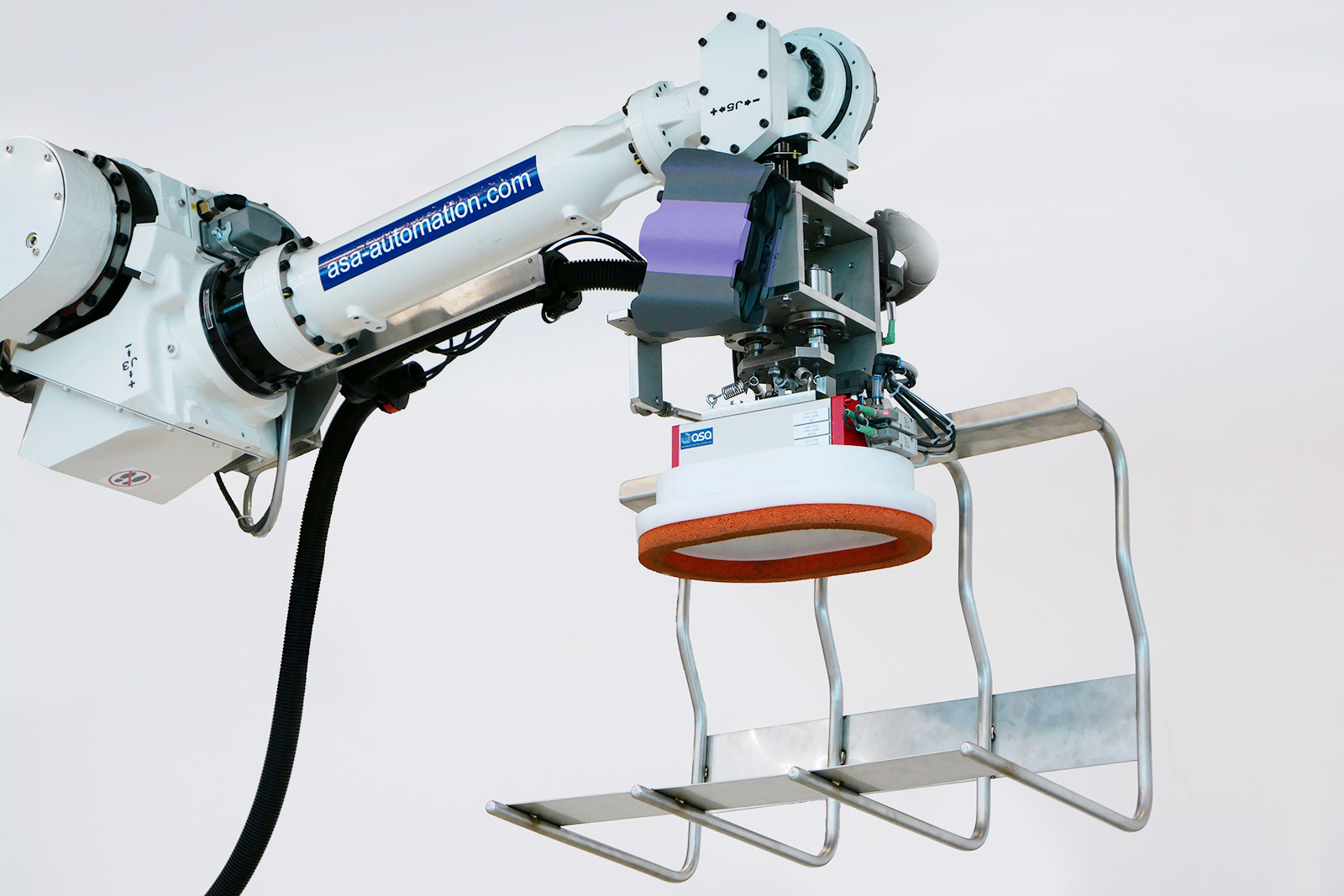

The bags are picked from their staging position on the pallet with a vacuum suction pad developed specifically for this application. The pad prevents the bags from sagging, which would otherwise happen to varying degrees depending on their contents and properties, and therefore ensures reliable product handling. The gripping positions also had to be determined with high accuracy: a bag that is not picked up exactly at its centre can tear open when it is lifted or during the subsequent movement.
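A minimal sketch of how a centred grip point could be derived, assuming the vision system has already segmented one bag into a list of 3D points `(x, y, z)` in millimetres in the robot base frame. The grip point is placed at the XY centroid of those points, at the height of the highest point near the centroid, and a tolerance check rejects off-centre candidates. The names `centred_grip_point`, `within_tolerance`, `radius_mm` and `tol_mm` are hypothetical, and the centroid only approximates the bag centre if the points are sampled roughly uniformly over its top surface.

```python
import math
from dataclasses import dataclass
from statistics import fmean

Point = tuple[float, float, float]  # (x, y, z) in mm, robot base frame (assumed)


@dataclass(frozen=True)
class GripPoint:
    x: float
    y: float
    z: float


def centred_grip_point(points: list[Point], radius_mm: float = 30.0) -> GripPoint:
    """Place the grip point at the XY centroid of one bag's points."""
    if not points:
        raise ValueError("bag segment contains no points")
    cx = fmean(p[0] for p in points)
    cy = fmean(p[1] for p in points)
    # Approach height: the highest point within radius_mm of the centroid,
    # so the target height is not below the bag's top surface there.
    near = [p[2] for p in points if math.hypot(p[0] - cx, p[1] - cy) <= radius_mm]
    cz = max(near) if near else max(p[2] for p in points)
    return GripPoint(cx, cy, cz)


def within_tolerance(candidate: GripPoint, centre: GripPoint, tol_mm: float = 10.0) -> bool:
    """Reject grip points whose horizontal offset from the centre exceeds tol_mm."""
    return math.hypot(candidate.x - centre.x, candidate.y - centre.y) <= tol_mm
```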

Image processing with AI

Because the bags vary in geometry, position and material, conventional image processing methods for recognising object positions were ruled out. The 3D picking solution EyeT+ Flex was therefore used to determine the gripping point precisely, since, in addition to other tools, it allows objects to be taught with the help of artificial intelligence.

The eye of the system is a camera mounted on the robot arm, which takes two 3D scans of the pallets loaded with bags. Two scans are needed because the camera has to work close to the objects: the room height is low and the stacks are relatively high. A single wide-angle image was not an option because of distortion. A height sensor was also ruled out, as it risked measuring the object height incorrectly at lower-lying bag edges, which could have led to collisions between the gripper and the bags. Instead, the camera takes two images, and suitable software combines the image data.
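One plausible way to combine two scans is sketched below in Python; the software actually used is not described, so this is an assumption throughout. Each scan's points are transformed from the camera frame into the robot base frame using the camera pose at capture time (`rot`, `trans`, which in practice would come from the robot pose and a hand-eye calibration), and then fused into a height map that keeps the highest Z value per grid cell, so the overlap region yields a single surface. The names `to_base_frame`, `fuse_height_map` and `cell_mm` are hypothetical.

```python
import math
from collections.abc import Iterable, Sequence

Vec3 = tuple[float, float, float]


def to_base_frame(points: Iterable[Vec3], rot: Sequence[Sequence[float]], trans: Vec3) -> list[Vec3]:
    """Map camera-frame points into the robot base frame: p_base = R · p_cam + t."""
    return [
        tuple(rot[i][0] * x + rot[i][1] * y + rot[i][2] * z + trans[i] for i in range(3))
        for x, y, z in points
    ]


def fuse_height_map(scans, cell_mm: float = 10.0) -> dict[tuple[int, int], float]:
    """Fuse scans, each given as (points, rot, trans), into one height map.

    Each XY grid cell keeps the highest Z seen in any scan, so the region where the
    two scans overlap produces one surface instead of two copies.
    """
    heights: dict[tuple[int, int], float] = {}
    for points, rot, trans in scans:
        for x, y, z in to_base_frame(points, rot, trans):
            cell = (math.floor(x / cell_mm), math.floor(y / cell_mm))
            if z > heights.get(cell, -math.inf):
                heights[cell] = z
    return heights
```

Keeping the maximum per cell errs on the side of avoiding gripper collisions, but it also keeps noise spikes, so a practical implementation would likely filter outliers before fusing.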